AMD deep dive here. Enjoy.

1.0 AMD´s “Secret Recipe”

2.0 Xilinx and Pensando: Towards Pervasive AI

3.0 From CPUs to GPUs

4.0 Future Applications, China and the Macro-economy

5.0 Business Segments and Financials

6.0 Thoughts on Taiwan and Conclusion


1.0 AMD´s “Secret Recipe”

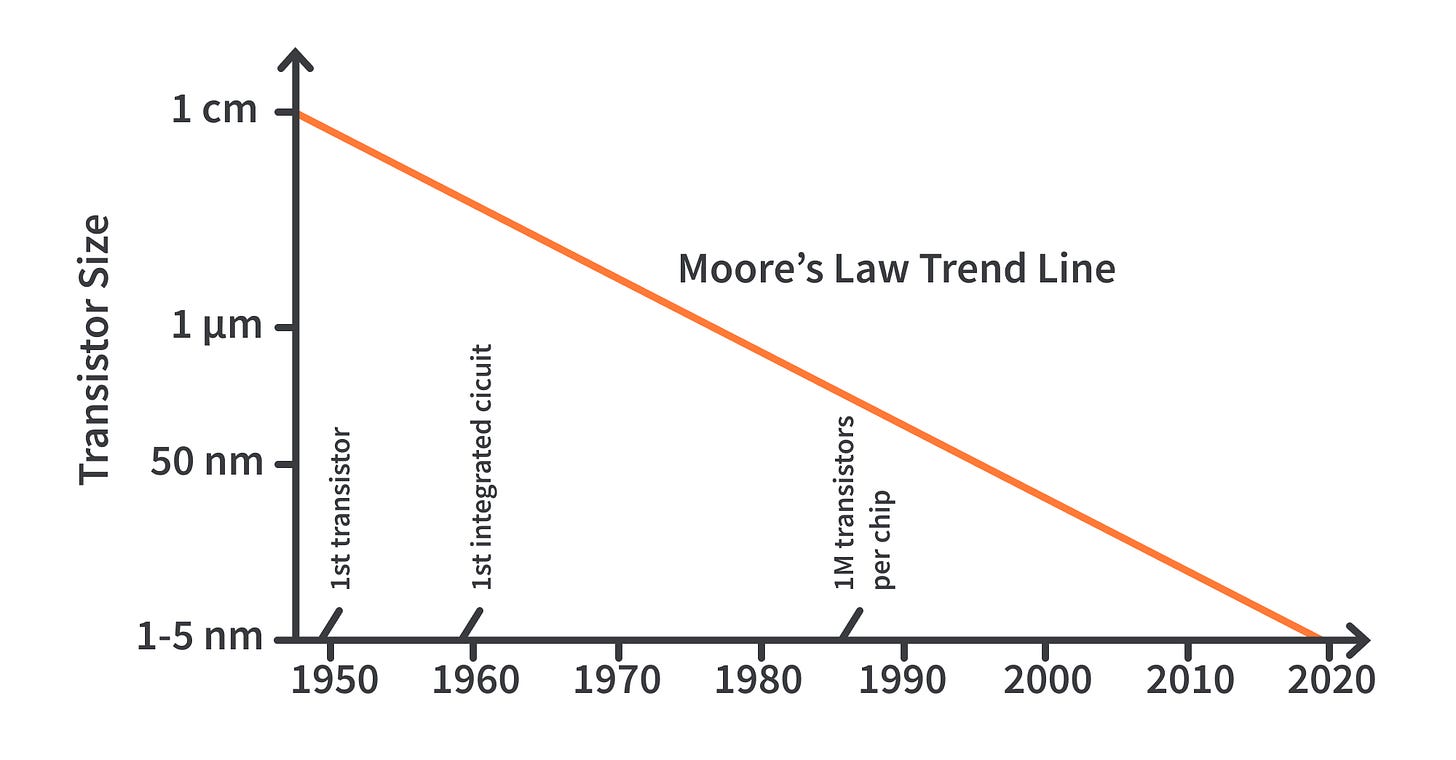

AMD is leading the way around Moore´s Limit.

A question that many are asking themselves is, how did AMD turn around successfully? Much like Tesla, this has been a company that has persistently surpassed expectations, but the market has not quite been able to put a finger on just how it has been able to do so. I have been a shareholder in the company since 2014 and every time the stock pulls back aggressively, which it does often, current and prospective investors vigilantly ask themselves, does the company still have it, whatever it is? In this section and throughout the deep dive, you will gain a fundamental understanding of the company and its “secret recipe”.

Moore´s Law predicts that “the number of transistors per device will double every two years”, as the transistors themselves get smaller. Gordon Moore was right when he wrote his original paper in 1965, but as we head beyond the 5nm domain, complexity is skyrocketing. In effect, given the way semiconductors are made, the industry is running up against the lithographic reticle limit, whereby producing cutting-edge chips is getting exponentially more expensive and, beyond a certain die size, physically impossible. This dynamic has been silently reshaping the industry for almost a decade now and the broader market is only starting to realize it.

What is even more amazing is that Moore predicted this trend in his original paper too:

“It may prove to be more economical to build large systems out of smaller functions, which are separately packaged and interconnected. The availability of large functions, combined with functional design and construction, should allow the manufacturer of large systems to design and construct a considerable variety of equipment both rapidly and economically.” – Gordon E. Moore

The name of the game in semiconductors is to deliver more computing power for less: less cost and less energy consumption. The industry has thus far strived to satisfy this equation by creating monolithic chips, cramming increasing amounts of computing power into one chip. AMD, however, has been pursuing the chiplet route for roughly the last decade, which to many, including Intel, seemed like a really silly idea at the outset. As we move towards the lithographic limit and monolithic chips look increasingly like a dead end, this perception is quickly fading.

In essence, chiplets are able to yield almost the same performance as the top monolithic chips, but at a 40% discount, because if something goes wrong in production you do not have to throw the whole chip away, but rather just one or a few chiplets. Thus, yields are much higher and, across the board, so is organizational agility. This has translated into AMD giving Intel a really hard time in the CPU space and into the company inexplicably reaching new highs, quarter after quarter.
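To make the yield argument concrete, here is a minimal sketch using the simple Poisson yield model, where the share of defect-free dies is exp(-area x defect density). The total area, defect density and chiplet count are hypothetical numbers picked for illustration, not AMD or TSMC figures, and the sketch ignores packaging and interconnect overhead.

```python
import math

def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies with zero defects under the simple Poisson yield model."""
    return math.exp(-area_cm2 * defects_per_cm2)

def silicon_per_good_product(total_area_cm2: float, n_dies: int,
                             defects_per_cm2: float) -> float:
    """Expected silicon area fabricated per working product.

    The product is split into n_dies equal dies. A defective die is
    discarded on its own, so only that die's area is wasted.
    """
    die_area = total_area_cm2 / n_dies
    return total_area_cm2 / poisson_yield(die_area, defects_per_cm2)

# Hypothetical inputs, chosen only for illustration.
TOTAL_AREA_CM2 = 6.0   # total logic area of the product
DEFECT_DENSITY = 0.1   # defects per cm^2
N_CHIPLETS = 8

monolithic = silicon_per_good_product(TOTAL_AREA_CM2, 1, DEFECT_DENSITY)
chiplets = silicon_per_good_product(TOTAL_AREA_CM2, N_CHIPLETS, DEFECT_DENSITY)
saving = 1 - chiplets / monolithic

print(f"monolithic die yield: {poisson_yield(TOTAL_AREA_CM2, DEFECT_DENSITY):.1%}")
print(f"single chiplet yield: {poisson_yield(TOTAL_AREA_CM2 / N_CHIPLETS, DEFECT_DENSITY):.1%}")
print(f"silicon saved per good product: {saving:.1%}")
```

With these made-up inputs the saving lands in the same ballpark as the discount quoted above, but the point is the mechanism rather than the exact figure: the smaller the die, the less silicon a single defect destroys.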

The corporate DNA/culture that goes into producing chiplets successfully is totally different to doing so with monolithic chips: it not only requires but further enables a company to embrace a level of agility that monolithic players do not have quite so much of. We know well that in a rapidly changing world, this is an invaluable quality and it will be even more valuable going forward, as the industry moves towards gen 4 datacenters and beyond the 5nm domain.

Both Intel and Nvidia are now moving towards chiplets, but AMD has been at it for roughly a decade. The countless business processes, cultural elements and collective wisdom AMD has developed during this period will serve it well going forward. This is yet another textbook example of the “Innovator´s Dilemma”. Along comes a non-incremental innovation that gradually turns the industry on its head, with incumbents being dismissive in the earlier stages, only to pivot once the previously unthreatening contender has become a freight train.

For AMD to pursue this strategy circa 2014 when very serious and intelligent people thought it was a terrible idea, it required an additional set of extraordinary corporate properties that become very apparent if you listen to Lisa Su talk for 10-20 hours and that persist to this day:

Management actively ensures that everyone in the company feels connected to the mission and feels like they have a real opportunity to make an impact in the industry.

Employees are empowered and have plenty of freedom.

There is a focus on what Su terms “extreme communication” and employees run toward problems (transparent information flow, especially for bad news).

AMD had to overcome a series of daunting technical challenges to make chiplets a reality and so, the whole picture just screams world class management and culture.

Intel and Nvidia are great companies too, but especially in the case of semiconductor designers, success is built not only on excellent corporate properties but also on a successful tech roadmap. In essence, a company like AMD is just a bunch of people working together to design new chips - all they do is process information (electrons) to unlock novel atom configurations that yield some kind of benefit, but someone has to decide what the strategy is for the next 5 years. The company has to make the right tech bets.

In fact, the main reason the company flopped around so much pre-Su is that it was not making the right bets.

What we have seen thus far is the result of the roadmap that Su (and others that take less credit for it) put in place years back. It denotes an ability to allocate capital well and this is perhaps the most important attribute of the company. Pursuing the chiplet route was an excellent, contrarian decision. Now we have the same capital allocators, with way more resources at their disposal, leading the way around Moore´s Limit. The cards are still very much on the table for AMD - which brings me onto the next section.

2.0 Xilinx and Pensando: Towards Pervasive AI

Semiconductor companies are no longer about just C/GPUs. They are about moving electrons around and generating insights, for themselves and for others, making AI something pervasive across our world.

The market is even more puzzled about why Su spent $49B in stock to acquire Xilinx, and just under $2B in cash to acquire Pensando. That is because the market currently thinks of semiconductor companies as being predominantly concerned with CPUs and GPUs, and does not think about computation at a fundamental level. The point of computation is to move electrons around and unlock insights. As more and more things get connected to the internet, in order for C/GPUs to drive incremental productivity they must sit in a highly connected and self-optimizing environment: the stateful and smart data center.

Some context.

If you have been reading me for a while, especially my Tesla deep dive, you will know that I believe that many problems are increasingly networking problems or a function of how well we can manage electrons. For the next few decades, most of the economy will be about collecting data from endpoints and processing it (training an AI) to yield insights (having the AI make inferences) that can then drive value. This will redefine our economy (it will run on inferences), as we go from being terrible at making predictions to being great at making them. The Xilinx and Pensando acquisitions set AMD up very well for this future.

Starting with Pensando, the company excels at making datacenters stateful, which is the basis for AMD´s next chapter in that it provides the environment for its products to self-optimize and for the rest of the world to run Industry 4.0 infrastructures. Statefulness abstracts away the complexity that gen 3 data centers brought upon us. Let me explain.

Data centers have been evolving for decades and until roughly 2010, traffic was predominantly north-south and ran on bare metal: a request for information would come into the data center and it would respond with a package. Since 2010, with the advent of gen 3 datacenters, applications (and servers) got virtualized, in that they got broken down into a number of services. The application logic now sits in one virtual machine, the database in another and so forth: micro-services, as AWS terms them. This brought about a new kind of traffic, east-west, in which information flows among different components of the data center, and it sent the complexity of managing them skyrocketing.

Statefulness kills the complexity, so that “anyone” can become a hyperscaler.

When a data-center is stateful it means that information about itself flows around totally offloaded from C/GPUs, providing no extra work for them. As the data-center deals with north-south and east-west workloads, it generates this data, which can then be fed into ML algorithms in order to train them to autonomously run all sorts of applications, ranging from XDR to analytics and microsegmentation. In this way, AMD is now able to provide not just the computing units but also the environment that its customers will need them to run in for the next decade or two.

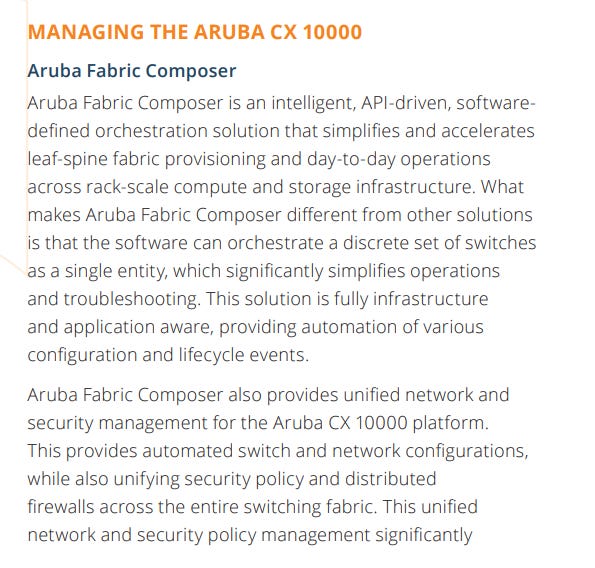

This is seemingly facilitated by Pensando´s hardware (DPU, data processing unit), but is actually mostly enabled by Pensando´s Stateful Software Services, which in turn seems to be based on the Aruba Fabric Composer:

“… the software can orchestrate a discrete set of switches as a single entity.” - Pensando

“But seriously, with Pensando, it is not just the hardware. Around 90 percent of the engineers at Pensando are software engineers. I don’t think people realize that. I mean, they’ve got a complete, hardened enterprise and cloud stack that covers everything.” - Forrest Norrod, Senior VP at AMD

Now, where do Xilinx and FPGAs come in? FPGAs are like ASICs (application-specific integrated circuits, chips customized for a specific use), but you can configure them on the go - one FPGA can turn into any ASIC you want with a little bit of code, versus an entire manufacturing process. ASICs and by extension FPGAs are far better than GPUs at making inferences from AI models. So you can litter other computing units with FPGAs to equip them with inference capabilities.
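As a rough intuition for what "configure them on the go" means, the toy sketch below models a lookup table (LUT), the basic logic element FPGAs are built from: the same element behaves as a different gate depending on the truth table loaded into it. The `LookupTable` class is purely hypothetical and leaves out everything that makes a real FPGA hard (routing, timing, DSP blocks, and the HDL toolchain that produces the bitstream).

```python
from itertools import product

class LookupTable:
    """A toy n-input lookup table (LUT), the basic logic element of an FPGA."""

    def __init__(self, n_inputs: int):
        self.n_inputs = n_inputs
        self.table = [0] * (2 ** n_inputs)  # one output bit per input combination

    def configure(self, func) -> None:
        """'Reprogram' the LUT by filling its truth table from a Python function."""
        for bits in product((0, 1), repeat=self.n_inputs):
            self.table[self._index(bits)] = int(bool(func(*bits)))

    def __call__(self, *bits: int) -> int:
        """Evaluate the LUT: a plain table lookup, no logic is recomputed."""
        return self.table[self._index(bits)]

    @staticmethod
    def _index(bits) -> int:
        """Pack the input bits into a table index, first input as the high bit."""
        index = 0
        for bit in bits:
            index = (index << 1) | bit
        return index

lut = LookupTable(2)

lut.configure(lambda a, b: a and b)  # the element now behaves as an AND gate
and_out = [lut(a, b) for a, b in product((0, 1), repeat=2)]

lut.configure(lambda a, b: a ^ b)    # same element, reconfigured as an XOR gate
xor_out = [lut(a, b) for a, b in product((0, 1), repeat=2)]

print("AND:", and_out)
print("XOR:", xor_out)
```

An ASIC would have one of those gates fixed in silicon at the fab, whereas here the change is just a new table.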

Every deep learning (AI) model consists of linear algebra on the way forward (inference) and multivariate calculus on the way back (learning). Whilst an FPGA may not be able to deal with multivariate calculus as well as a GPU, it can excel at accelerating linear algebra. Different deep learning models perform different linear algebra computations and so, you want a computing unit that can morph accordingly at a marginal cost, as you move from working with one model to another. Only FPGAs can do that and Xilinx is the clear leader in the field.
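The forward/backward split can be sketched with the smallest possible "model": a single linear layer. In the hedged example below, the weights, input and target are hypothetical values chosen only to show the mechanics. Inference is a matrix-vector product, and learning needs the gradient of the loss with respect to the weights, obtained via the chain rule.

```python
def matvec(W, x):
    """Forward pass (inference): a plain matrix-vector product."""
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in W]

def loss(y, target):
    """Half the squared error between prediction and target."""
    return 0.5 * sum((y_i - t_i) ** 2 for y_i, t_i in zip(y, target))

def grad_W(W, x, target):
    """Backward pass (learning): dLoss/dW via the chain rule, i.e. (y - t) x^T."""
    y = matvec(W, x)
    return [[(y_i - t_i) * x_j for x_j in x] for y_i, t_i in zip(y, target)]

# Hypothetical numbers, chosen only for illustration.
W = [[0.5, -0.2], [0.1, 0.3]]  # weights
x = [1.0, 2.0]                 # input
target = [1.0, 0.0]            # label
learning_rate = 0.1

before = loss(matvec(W, x), target)

# One gradient-descent step: nudge every weight against its gradient.
g = grad_W(W, x, target)
W = [[w - learning_rate * dw for w, dw in zip(w_row, g_row)]
     for w_row, g_row in zip(W, g)]

after = loss(matvec(W, x), target)
print(f"loss before: {before:.4f}, after one step: {after:.4f}")
```

Once training is done, only `matvec` is needed to serve predictions, which is the part of the workload the paragraph above argues FPGAs can accelerate.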

*You can learn more about how AI works in my post Artificial Intelligence 101

Accelerating linear algebra at marginal cost in an automated way: who cares? Going back to my definition of what the economy is going to be about for the coming decades, inference will be a really big part of it if not most of it - AI will be pervasive. Once we train an AI model with data, it can only be useful to us if we are able to make inferences with it at a cost-effective price. FPGAs are set to take over GPUs (and ASICs) on the inference side of the equation and when running on gen 4 datacenters, they can make two types of inferences:

Endogenous: concerning the various components of the data-center, including C/D/GPUs, enabling them to get smarter.

Exogenous: concerning data that involves entities that are not an integral part of the data-center (applications running on it).

Think about this for a second: a CPU running on a datacenter, learning how to get better from the inferences that an FPGA attached to it is making: a CPU with an FPGA infused into it taking over half the job of a GPU in the AI domain. Do you see where this is going? With these acquisitions, AMD is no longer about just providing the best CPUs and GPUs (addressing this in the next section), but about providing the environment in which they can excel and can be mixed and matched to optimally address the needs of their customers. It is also set up now to become an inference giant, which has vast implications for the business going forward.

In this sense, AMD´s experience in connecting different computing units in the form of chiplets (via AMD Infinity Fabric) will be a key facilitator for this next chapter, by enabling it to configure products in a highly tailored manner. In fact, AMD has announced that it will “infuse its CPU portfolio with Xilinx's FPGA-powered AI inference engine, with the first products slated to arrive in 2023”. Monolithism is getting rapidly antiquated in the semiconductor industry.

There are now two further considerations that are noteworthy, building on what I have explained above. Firstly, we are going to see an explosion in unstructured data over the next decade and secondly, the datacenter´s intelligence will extend to the edge. In effect, we are going to have many objects connected to the internet and they are going to be running inferences on their own too, working their way through data that is sometimes static images, sometimes videos, words or numbers. This is going to require, much like in gen 4 datacenters, different types of computing units to be connected in the same device.

AMD´s achievements in the past 8 years may look like a little snack in 10 years time.

“Our largest opportunity is in AI, and we've already started executing new hardware and software roadmaps to capture the significant opportunity we see to drive pervasive AI across cloud, edge, and endpoints. In summary, our work over the last several years has placed AMD on a significant growth trajectory.

We remain laser-focused on executing our product and technology roadmaps, further deepening our customer relationships, and investing strategically across the company to drive our next phase of growth across the $300 billion high-performance and adaptive computing markets.” - Lisa Su, AMD CEO @ Q2 2022

3.0 From CPUs to GPUs

Chiplets are now moving towards GPUs, or vice versa.

AMD has thus far succeeded at taking market share from Intel due to its chiplet strategy. As recently as 2017, Intel was actively discrediting the strategy so, whilst not impossible, it will likely take them some time to catch up. Either way, I am fond of the idea of buying Intel stock for their upcoming foundries, which is possibly one of the better hedges in case things go wrong in Taiwan. If AMD messes things up, you get some very nice exposure to semi production and CPUs. Otherwise, it is a win-win, especially at these rather prudent prices.

The GPU space presents a more interesting picture, with players clearly moving towards the same approach that AMD has championed in the CPU space. AMD has not launched GPUs based on its chiplet architecture yet, but the company has been cross-pollinating its CPU and GPU divisions for some time now and it will be launching its first chiplet-based GPUs in early 2023 (RDNA3). If this operation is as well managed as the rest of the company, it is likely that the GPU division will inherit the properties that enabled the CPU division to succeed.

“From our point of view, we had the CPU engine in hand, and that’s going well. We have the GPU engine in hand, but it is two to three years behind the CPU and executing against a more capable competitor, but executing the same fundamental strategy. When we lifted our eyes up from the compute engines, the next thing was pretty clear: network acceleration and infrastructure acceleration.” - Forrest Norrod, Senior VP at AMD

“The semiconductor industry is near the limit” - Jensen Huang

Tesla has chosen to pursue the chiplet route to train its various AIs. The way Dojo is set up maximizes the useful runtime of every D1 chip when running a neural network on it, versus existing GPUs that spend a lot of time sitting idle while dealing with memory workloads. This seems to be due to an ingenious way of connecting the tiles and spreading workloads among them, similar to AMD´s Infinity Fabric. The results are quite astonishing, although bear in mind that this is for AI workloads only, whilst GPUs serve other markets such as gaming and crypto.

“… we started with hardware design that breaks through traditional integration boundaries.” - Tesla AI Day 2022

Musk: “So, the jury is out on Dojo. Dojo team thinks they can outperform NVIDIA for neural training. Dojo is out.”

Nvidia on the other hand has been pursuing the “super-chip” route, which essentially consists in putting a bunch of big chips together. This can no doubt yield great performance, but my view is that as we continue to move down the nanometer curve this approach can somewhat lead to a relative dead end. Of course, the people at Nvidia are not dumb and they will likely find a way forward, but this currently requires pivoting the organization to a more modular way of thinking. NVLink is Nvidia´s proprietary technology to connect computing units, so it will be interesting to see if it can scale as well as AMD´s Infinity Fabric.

Nvidia merits a deep dive on its own here.

Although I do believe that FPGAs will take over GPUs in AI inference, I believe that GPUs will very much remain relevant in AI training. As AMD moves towards chiplet-based GPUs and combines them with FPGAs, it can do a lot of damage to Nvidia, as it gets a hold on both training and inference. AMD, thanks to Infinity Fabric, will then be able to infuse its products with these capabilities, notably CPUs and DPUs, which will probably create even more damage.

I currently own AMD and Tesla (my first two investments ever, since 2014), so apart from reviewing AMD´s potential for the next 10 years and whether continuing to hold it makes sense, I am looking into whether Nvidia is a worthwhile addition to the portfolio. I will likely be diving deep into the company soon.

4.0 Future Applications, China and the Macro-economy

If you deeply understand computing´s role in our civilization, China and the macro-economy are just little bumps in the road.

There is much concern in the market about the export ban to China, which I do not share. Firstly, I believe that sharing too much technology with China whilst the CCP is in power is not a good long term strategy. They will eventually use the technology to impose their own values and preferred lifestyles on us and living in Europe myself, I have had quite enough of that from 2020 to date. I am very happy to sacrifice some short term paper gains in this context. Secondly, the demand for computing in 10 years time will be exponentially higher than today, with or without China, regardless of how strong the economy is.

This was one of the main premises for my AMD thesis in 2014 and it remains so today. So long as AMD continues to provide more computing for less than its competitors and remains well managed, the stock will continue to go up in the long term. Given my natural curiosities, which I often explore here, I have a second-row seat from which to view how the industries that will generate a lot of that exponential rise in computing demand are evolving, and I can tell you that perhaps we need a new word to define something that is beyond exponential.

Among them, I believe synthetic biology will be the #1 driver of computing demand. The general public is not yet aware of what syn bio implies, but currently the world´s total computing power is not enough to satisfy 0.1% of its potential. It will lift humanity out of material scarcity and it will cure many diseases that today are incurable, totally redefining what it means to be human.

I believe that in 20 years´ time, pretty much everything that is not totally based on empathy and the highest realms of creativity will be a matter of moving electrons around and making inferences, including biology. We live in a universe in which there is no material scarcity, just a lack of insights in terms of how to unlock the abundance that is around us. That is a game for AI and it is very likely that it will continue to run on AMD´s computing units, because the company is making the right bets.

I entered the stock at $4.2, when nobody wanted it (as is the case with my other, newer investments today), so I am in a more comfortable position in this bear market than otherwise. However, what got me here in the first place was thinking long term and following the fundamentals. There is nothing different about this occasion.

5.0 Business Segments and Financials

The best thing about the Xilinx and Pensando acquisitions is that they not only position AMD to be an HPC leader, but also have not put the company in jeopardy. The company remains in great financial health.

Until the Xilinx and Pensando acquisitions, AMD offered the following:

CPUs: for servers (EPYC) and for consumer products (Ryzen).

GPUs: for servers (CDNA) and for consumer products (RDNA).

*servers = cloud, enterprise, consumer products = things that end consumers buy, like laptops and game consoles.

Generally, so long as these products continue to rapidly improve their computing performance per watt, the company will tend to do well financially. AMD focuses on the HPC (high-performance computing) space and so the actual performance of the product has a relatively higher weight for its customers than price. However, total cost of ownership is still one of the key selling points in HPC and delivering more computing for less power (watts) is the way to go.

To give you a sense of how AMD operates here, RDNA2 had 50%+ higher performance per watt than its predecessor RDNA, with both running on the same 7nm node. The company is now targeting another 50%+ increase in performance per watt for RDNA3 (5nm node), and continuing down this path ahead of its various competitors is how AMD gets to deliver exponentially more computation at a reasonable price in the not so distant future. This rate of improvement is palpable across the product portfolio (CPUs, GPUs).
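To see how these per-generation gains compound, here is a small sketch that treats the quoted 50% as an exact figure for each generation. That is an assumption made for illustration: the source figures are "50%+" floors, and the RDNA3 number is a target rather than a result.

```python
def compounded_gain(per_generation_gain: float, generations: int) -> float:
    """Cumulative performance-per-watt multiple after several generations."""
    return (1 + per_generation_gain) ** generations

def energy_for_same_work(gain_multiple: float) -> float:
    """Energy needed for a fixed workload, relative to the baseline."""
    return 1 / gain_multiple

# RDNA -> RDNA2 -> RDNA3, assuming exactly +50% performance per watt each time.
two_gens = compounded_gain(0.50, 2)

print(f"cumulative perf/watt after two generations: {two_gens:.2f}x")
print(f"energy for the same work: {energy_for_same_work(two_gens):.0%} of baseline")
```

Under that assumption, two generations deliver 2.25x the work per watt, which is why a steady cadence of such steps reads as exponential over time.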

Fundamentally, their ability to interconnect different computing units makes it all the more likely that the company will experience continued success with this metric going forward. This is because now, as explained in section 2.0, compounding performance per watt through time is not only a function of improving the C/GPUs themselves: it is now also about being able to infuse them with accelerators (FPGAs) and place them in an environment where they can learn to optimize themselves, better than any human chip designer could. All this for the right markets at the right time (agility).

The accelerators themselves have to be able to keep up with the pace of learning and morph accordingly, which is why the Xilinx acquisition is key: it gives AMD the superpower to generate ASICs on the fly at a marginal cost, as the smart infrastructures based on Pensando´s DPU and stateful software services increasingly turn the management of data centers and of the edge into an electron management problem. This will yield an added moat that competitors will have a tough time catching up with, unless they develop similar ecosystems. The financials we see today do not even begin to reflect this future.

To be clear, it is not like Intel and Nvidia cannot connect computing units or move towards gen 4 datacenters themselves and as discussed above, they could both be great investments on their own, but:

In 2015, Intel acquired Altera to gain access to its FPGA portfolio. It then announced a CPU infused with an FPGA in 2015, but the actual chip did not arrive until 2018, after which the project apparently came to a dead end. The company has not announced a similar initiative since.

In 2020, Nvidia bought Mellanox for $7B to gain access to its DPU technology. Having looked deeply into it, however, I cannot seem to find technology analogous to the Aruba Fabric Composer used alongside Pensando´s DPUs (which enables them to operate all the PCIe ports as one and fully offload services from HTTP in the datacenter), and so I have serious doubts that they can fully deliver gen 4 datacenters.

The above are just a few loose data points of the many that I have picked up, which overall make me feel like AMD is currently the ultimate intersection of the right technology portfolio and the optimal culture for an increasingly modular and yet holistic, interconnected environment in the semiconductor space. I believe that as a result, the financials will continue to follow.

Income Statement

Following the acquisitions, the company has now re-arranged its business segments as follows:

The data center segment includes data center CPUs and GPUs, Pensando, and Xilinx data center products.

The client segment includes desktop and notebook PC processors and chipsets.

The gaming segment includes discrete graphics processors and Semi-Custom game console products.

The embedded segment includes both AMD and Xilinx embedded products.

Q2 2022 was the first quarter in which the financial statements included Xilinx´s financial results and AMD closed the acquisition of Pensando during the quarter too. At the very least, the Xilinx deal seems to have not hurt AMD´s I/S, except for $616m in amortization of acquisition-related intangibles that is impacting the I/S in the short term and the corresponding dilution for shareholders, which I believe is nonetheless for the better.

Short term, it looks like the company is also in for some weakness on the consumer side of things, but it currently does not change my long term view of the company.

“… revenue was $6.6 billion, up 70% from a year ago, driven by higher revenue across all segments and the inclusion of Xilinx revenue. Gross margin was 54%, up 640 basis points from a year ago, driven primarily by higher data center and embedded revenue.

Operating expenses were $1.6 billion, compared to $909 million a year ago as we continue to scale the company. Operating income more than doubled from a year ago to record $2.2 billion, driven by significant revenue growth and higher gross margin.” - Devinder Kumar, AMD CFO @ Q2 2022 ER

All throughout the turnaround, the company has been scaling its operating expenses quite sensibly and the last quarter is no exception, despite the acquisition, with the operating margin hitting 30%, up from 24% a year ago. Xilinx is no little toy with just under 5,500 employees (versus AMD with just over 15,500), so operating income / margin is worth watching going forward.

The one qualitative factor that will drive this metric as the integration takes place and then the materialization of the vision that I outline in section 2.0 is the cultural fit of the three organizations, which Lisa has previously publicly commented on. If you listen to her interview that I linked to in section 1.0 starting at minute 8:31, you will learn a lot about how she manages the company and how she thinks about acquisitions in general, beyond the technological aspects.

There are not that many managers that understand what management really is at a deeper level and I find betting on people like that far more important than going crazy looking at numbers. I highly recommend reading Loonshots, The Innovator´s Dilemma and Influence (by Robert Cialdini) to learn about this. If she got the cultural fit right this time, things will go well; otherwise, probably not so well. Tracking this will tentatively enable you to front-run the financials.

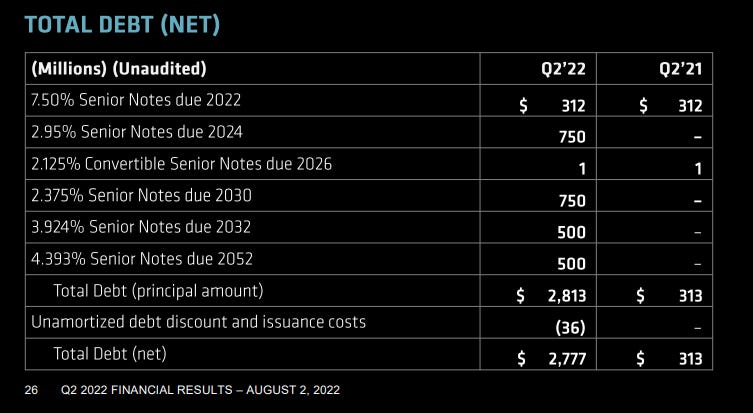

Balance Sheet

The company ended Q2 2022 with $2,777M in debt, having “established AMD in the investment-grade debt market by issuing debt of $1 billion.” AMD also has $5,992M in cash, so whichever way you look at it the balance sheet remains very strong, together with the strong cashflows explored in the next subsection.

Inventories are ticking up fast, “up approximately $220 million from the prior quarter in support of second-half revenue and the inclusion of Pensando”. One thing I think that the general public is not that aware of is the inevitable cyclicality of the semiconductor market. If you look at Nvidia´s stock price in 2018, for instance, it took a nose dive because it over-stocked - semiconductor history is full of instances like that.

Such is the nature of this market and like any other one, attempting to time it is often futile. The actual hedge is betting on great talent, management and a superlative tech roadmap.

Regardless, it is always good to keep an eye on inventories.

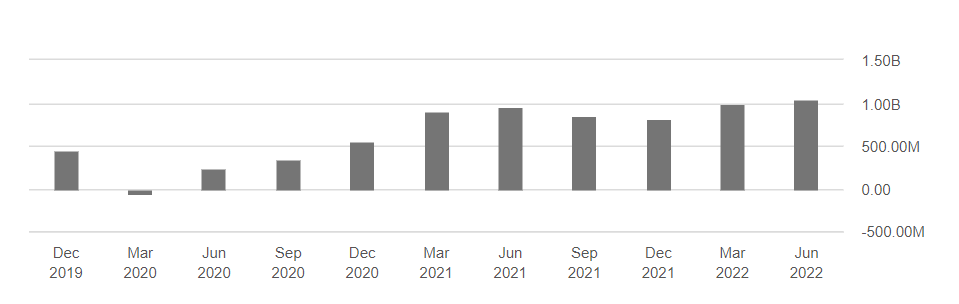

Cashflow Statement

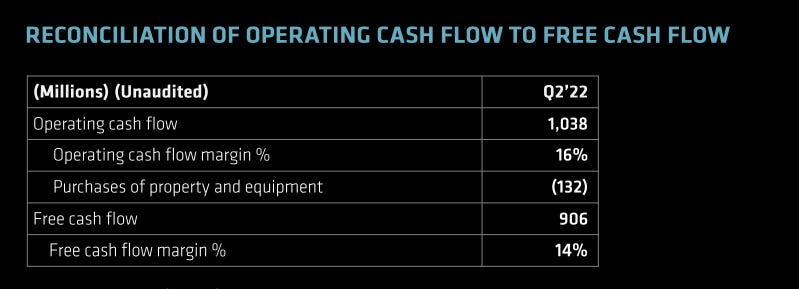

“Cash from operations was a record $1 billion. Quarterly free cash flow was $906 million, compared to $888 million in the same quarter last year.” - Devinder Kumar, CFO

The overall cashflow statement continues to healthily track top line growth. With no signs of OPEX getting out of hand, this will likely remain healthy.

6.0 Thoughts on Taiwan and Conclusion

AMD is perhaps one of the world´s most important assets at this time.

AMD only designs chips and TSMC makes most of them. TSMC makes 90% of the world´s advanced semiconductors, but it is located in Taiwan and China is particularly keen to rein the island back in. My main thought here is that this is an Operation Warp Speed 2.0 in the making. Our entire civilization relies on chips today and if Taiwan gets invaded, which is by the way looking less likely after Russia´s experience in Ukraine, it would hurt not only AMD stakeholders but the entire world.

In such an event, the world would literally scramble and put all its resources into building further fab capacity and eventually fix the problem. Along the way, (semi) stocks would crash and the world would probably enter a depression, but whatever resources the world has would go to chips, just like they went to synthetic biology during 2020-2023 (and beyond). Further, the US government will undoubtedly be interested in retaining its chip supremacy, so we can probably infer what it would do too. Under the assumption and indeed probable truth that chips are existential assets, I am happy to continue holding the company for the long term, even through a potential invasion of Taiwan.

Further, after this current downturn, far from being discouraged, I am now seeing more clearly where the world is heading and the impact that AI is going to have on it. At a P/E ratio of 17.47 and a P/FCF ratio of 26.4, with a strong balance sheet, torrential cashflows and a world class management and tech roadmap, this company is more than reasonably priced. The AMD we have seen since 2014-2016 is a toddler and now it is moving onto its adolescence, poised to be one of the world´s most important companies in 5 to 10 years´ time. Having been a holder since $4.2 per share, I am more than excited to see this play out.
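One way to read those two multiples is to flip them into yields, as in the short sketch below. It uses only the ratios quoted above. The helper name is my own, and the result says nothing about growth, which is where the thesis actually lives.

```python
def yield_from_multiple(multiple: float) -> float:
    """Invert a price multiple (P/E, P/FCF) into the corresponding yield."""
    return 1 / multiple

earnings_yield = yield_from_multiple(17.47)  # from the P/E ratio quoted above
fcf_yield = yield_from_multiple(26.4)        # from the P/FCF ratio quoted above

print(f"earnings yield: {earnings_yield:.2%}")
print(f"free cash flow yield: {fcf_yield:.2%}")
```

In other words, at these prices each dollar invested buys a bit under six cents of current annual earnings and a bit under four cents of free cash flow, before any growth.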

Until next time and as always, stay fundamental.


You can also reach me at:

Twitter: @alc2022

LinkedIn: antoniolinaresc
